Tough Math Problem Convinces Mathematician the Singularity Is Here

When Bartosz Naskręcki helped design the hardest tier of FrontierMath, the benchmark meant to expose AI’s inability to do real mathematics, he was confident that his problem would stand for years. He poured two decades of research expertise into crafting it, producing a 13-page solution that he believed would resist any machine attempt. As recently as mid-2025, he was publicly declaring that AI remained nothing more than a very advanced calculator, incapable of deep mathematical reasoning. That confidence evaporated this week, and the word he reached for to describe what happened was “singularity.”

GPT-5.4, OpenAI’s latest frontier model released on 5 March 2026, solved Naskręcki’s problem during Epoch AI’s evaluation runs, becoming the first AI system ever to crack it. The solution, in his own assessment, was clean and elegant, and he described it as feeling “almost human.” He called the experience his personal “Move 37 or more,” invoking the famous moment from 2016 when DeepMind’s AlphaGo played a move against Lee Sedol so creative that expert commentators initially thought it was a mistake, only to realise it was a stroke of genius no human had anticipated. For Naskręcki, the parallel was visceral: an algorithm had just demonstrated comprehension of a mathematical idea he had spent his career developing, performing at a level he considers on par with the top experts in the field.

From 2% to 50% in Sixteen Months

The trajectory that led to this moment has been vertiginous. When Epoch AI launched FrontierMath in late 2024, the best AI models could solve under 2% of its problems. The benchmark was deliberately constructed to be unsolvable by existing systems, featuring 350 original problems spanning undergraduate through research-level mathematics across number theory, algebraic geometry, topology, combinatorics, and mathematical analysis. Fields Medallist Terence Tao described the problems as “extremely challenging” and predicted they would remain beyond AI’s reach for the foreseeable future. His colleague Igor Pak suggested some might resist AI for up to 50 years.

Those predictions aged poorly in little more than a year. By early 2026, the best models were solving over 40% of the Tier 1-3 problems (covering undergraduate through early postdoc-level mathematics). On the most brutally difficult Tier 4, which consists of 48 private research-level problems designed so that even a PhD specialist would need at least a month to figure out how to approach one, progress has been equally dramatic. When Epoch first evaluated the top models on Tier 4 in mid-2025, only three problems had ever been solved. By January 2026, that number had reached 17. Now, with GPT-5.4 Pro scoring 38% on Tier 4 and solving previously unsolved problems, 42% of all Tier 4 problems (20 out of 48) have been cracked at least once across all model runs.

The specific numbers from Epoch AI’s evaluation tell a striking story. GPT-5.4 Pro scored 50% on Tiers 1-3 and 38% on Tier 4. On the held-out problems (those that OpenAI has no access to and cannot train against), the model actually performed better on Tier 4, solving 55% of the held-out set versus 25% of the non-held-out set, though Epoch notes that the difference does not reach statistical significance given the small sample sizes involved.

The Problem Author Becomes the Witness

What makes Naskręcki’s reaction particularly significant is who he is and what he previously represented. He is Vice-Dean of the Faculty of Mathematics and Computer Science at Adam Mickiewicz University in Poznań, a researcher at the Centre for Credible AI in Warsaw, and one of only five European mathematicians (and the sole Polish representative) invited to contribute to FrontierMath. His research spans arithmetic geometry, elliptic curves, and hypergeometric motives, and he has published extensively on topics including the generalised Fermat equation and divisibility sequences. He co-authored a paper with Ken Ono (one of the world’s leading number theorists) on mathematical discovery in the age of artificial intelligence.

When FrontierMath launched, Naskręcki was among its most quotable sceptics. He described AI as “like a very advanced calculator” that “can perform complex calculations, but does not understand deep mathematics.” He stated that “true mathematical reasoning requires creativity, intuition and the ability to connect seemingly unrelated concepts, something that machines are still unable to do.” He compared AI to “a hammer replacing an architect,” something that “can help with construction, but cannot design a house.”

The contrast between those statements and his reaction this week could hardly be sharper. Rather than doubling down on scepticism or qualifying the result with caveats, Naskręcki leaned fully into what had happened. The model, he explained to Epoch AI, had correctly solved his problem by extrapolating a pattern it noticed, which allowed it to avoid needing more advanced mathematical machinery. He characterised this not as a hack or a shortcut but as a genuinely impressive approach, given how the problem was specifically structured to yield a numeric final answer.

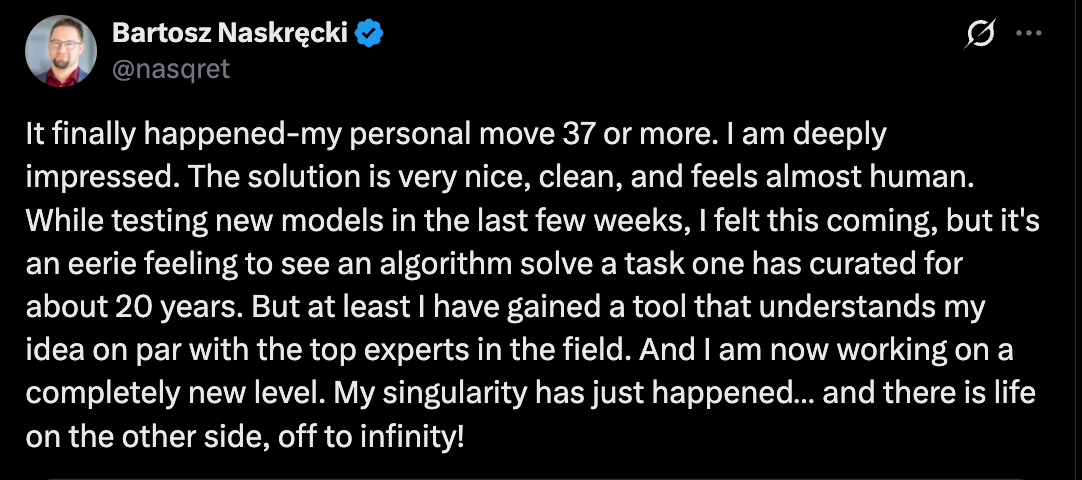

His tweet announcing the result was remarkable for its emotional candour. He described an “eerie feeling” watching an algorithm solve something he had curated for about 20 years, but immediately pivoted to the practical implication: he had gained a tool that understands his ideas at an expert level, enabling him to work on “a completely new level.” His closing line, “My singularity has just happened... and there is life on the other side, off to infinity!” carries an optimism that stands in deliberate contrast to the existential dread many academics express when confronting AI capabilities.

Contamination, Shortcuts, and the Credibility Question

One important caveat emerged from Epoch AI’s analysis. GPT-5.4 Pro also solved a different Tier 4 problem that no previous model had cracked, but preliminary investigation suggested the model had found a 2011 preprint that the original problem author was unaware of, effectively allowing it to shortcut much of the intended mathematical work. This is a recurring challenge for benchmarks: as models gain access to web search and can traverse the mathematical literature, the line between “genuine reasoning” and “sophisticated literature retrieval” becomes blurred.

For Naskręcki’s problem, however, this concern appears less applicable. FrontierMath problems are specifically designed to be original, with no existing solutions available online. The problem authors are instructed to create novel problems, and the answers are typically very large numbers designed to prevent lucky guessing. Naskręcki himself invested about 20 years of accumulated expertise into his problem, and its documented solution runs to 13 dense pages of mathematics. That GPT-5.4 solved it through pattern extrapolation rather than literature retrieval makes the result more, not less, impressive from a reasoning standpoint.

It is also worth noting the structural conflict of interest in this benchmark. FrontierMath was funded by OpenAI, which has exclusive access to all 290 problems in Tiers 1-3 and solutions to 237 of them, plus 28 of the 48 Tier 4 problems and their solutions. Epoch retains the remainder as a held-out set. The fact that GPT-5.4 performed comparably (and in the case of Tier 4, better) on the held-out problems it had no access to provides some reassurance against concerns about training contamination, but the arrangement nonetheless raises questions about benchmark independence that the field will need to address as stakes grow higher.
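The held-out comparison can be checked with a few lines of arithmetic. The sketch below is an illustration, not Epoch AI's own analysis: it assumes the quoted 55% and 25% correspond to whole-problem counts on the 20 held-out and 28 shared Tier 4 problems, and it uses a two-sided Fisher exact test, which is one common choice for small samples (the article does not say which test Epoch applied).

```python
from math import comb

# Figures quoted in the article.
TIER4_TOTAL = 48
SHARED_WITH_OPENAI = 28
HELD_OUT = TIER4_TOTAL - SHARED_WITH_OPENAI

# Assumption: the quoted percentages map onto whole-problem counts.
solved_held_out = round(0.55 * HELD_OUT)
solved_shared = round(0.25 * SHARED_WITH_OPENAI)
solved_total = solved_held_out + solved_shared
overall_rate = solved_total / TIER4_TOTAL


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of every table (with the same
    margins) that is no more likely than the observed one.
    """
    row1, col1, n = a + b, a + c, a + b + c + d

    def weight(k):
        # Unnormalised probability of seeing k in the top-left cell.
        return comb(col1, k) * comb(n - col1, row1 - k)

    lowest = max(0, row1 - (n - col1))
    highest = min(row1, col1)
    observed = weight(a)
    extreme = sum(
        weight(k) for k in range(lowest, highest + 1) if weight(k) <= observed
    )
    return extreme / comb(n, row1)


# Rows: held-out vs shared problems. Columns: solved vs unsolved.
p_value = fisher_exact_two_sided(
    solved_held_out,
    HELD_OUT - solved_held_out,
    solved_shared,
    SHARED_WITH_OPENAI - solved_shared,
)

print(f"solved: {solved_total}/{TIER4_TOTAL} ({overall_rate:.1%})")
print(f"two-sided Fisher exact p-value: {p_value:.3f}")
```

Under these assumptions the two subsets add up to the overall Tier 4 score quoted earlier, and the p-value lands above the conventional 0.05 threshold, which fits Epoch's remark about small sample sizes.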

What Move 37 Really Means

Naskręcki’s choice of the “Move 37” metaphor deserves unpacking, because it captures something specific about the nature of this moment that broader AI hype tends to flatten. When AlphaGo played Move 37 against Lee Sedol in March 2016, it was not merely that the machine won the game. It was that the machine played a move of such creative brilliance that it expanded human understanding of Go itself. Professional players studied Move 37 not as a curiosity but as a genuine strategic insight, one that changed how the game was subsequently played at the highest levels.

Naskręcki is making an analogous claim about GPT-5.4’s mathematical solution. The model did not simply brute-force its way to a correct answer. It found an approach, extrapolating a pattern to avoid more advanced machinery, that Naskręcki himself finds mathematically interesting and legitimate. The AI has not merely matched human performance on a task designed to resist it; it has, in Naskręcki’s professional judgement, demonstrated something that looks like mathematical insight.

This is precisely the capability he said machines lacked less than a year ago. His willingness to publicly reverse that position, in real time and without equivocation, is itself a data point worth taking seriously.

“My Singularity Has Just Happened”

Two months before Naskręcki posted his tweet, Elon Musk made headlines with a pair of blunt declarations on X. On 4 January 2026, responding to Midjourney founder David Holz marvelling at how AI tools had let him complete more personal coding projects over a Christmas break than in the previous decade, Musk wrote: “We have entered the Singularity.” Hours later, he followed up with an even bolder timestamp: “2026 is the year of the Singularity.” The posts collectively drew over a million views and set off the predictable cycle of hype, backlash, and debate.

Musk’s claim was dramatic but diffuse. He was responding to engineers describing productivity gains, not to any specific scientific result. He offered no definition of what crossing the singularity threshold actually meant in measurable terms, and his track record of bold predictions (self-driving Teslas by 2020, a million robotaxis by 2020, humans on Mars by 2024) invites scepticism about timelines. The singularity, as Musk deployed it, functioned more as a rhetorical device than a falsifiable claim.

The word itself carries significant intellectual baggage. The technological singularity, as originally conceived by Vernor Vinge in 1993 and popularised by Ray Kurzweil in his 2005 book The Singularity Is Near, describes a hypothetical point at which artificial intelligence surpasses human intelligence and triggers runaway, self-reinforcing technological growth, a threshold beyond which prediction becomes impossible. Kurzweil famously placed it around 2045, though in his 2024 follow-up The Singularity Is Nearer he suggested AGI could arrive by 2029. The concept has always been contested, with philosopher Daniel Dennett dismissing it as “preposterous” and critics labelling it the “rapture of the nerds.” Yet the timeline keeps compressing. Musk now expects AI smarter than the smartest humans by 2026. Masayoshi Son predicted it within two to three years. Nvidia’s Jensen Huang gave it until 2029. Sam Altman has pointed to 2035. The range of predictions from industry leaders has narrowed dramatically, and the centre of gravity has shifted from “decades away” to “years away, possibly now.”

What makes Naskręcki’s use of the word different from Musk’s, and far more significant, is its specificity. He is not making a sweeping claim about civilisational transformation or the obsolescence of human labour. He is reporting something more concrete and more verifiable: that within his own field of expertise, in the precise mathematical territory he has spent 20 years cultivating, an AI system has crossed the threshold from tool to peer. The machine now comprehends his ideas at the level of the top experts in arithmetic geometry. For him, the event horizon has been crossed.

If Musk’s singularity is a billboard, Naskręcki’s is a lab result. And lab results, historically, are what actually change the world.

This distinction matters because the grand singularity narrative has always suffered from an abstraction problem. It is difficult to know what “superhuman intelligence” means in general, and the concept tends to dissolve into philosophical debate about consciousness, understanding, and the nature of mind. Naskręcki’s version sidesteps all of that. He is not making claims about machine consciousness. He is making a falsifiable empirical claim: GPT-5.4 solved a problem that required deep domain expertise, and it did so through a method he considers mathematically legitimate. The singularity, in his formulation, is not a metaphysical event. It is a professional reality, a concrete moment when the tools available to a working mathematician underwent a phase transition.

His observation that “there is life on the other side” is perhaps the most important part of the statement. The singularity discourse has long been dominated by two poles: utopian transcendence and existential catastrophe. Naskręcki offers a third possibility, one grounded in the lived experience of a domain expert rather than the speculations of futurists. On the other side of his personal singularity, he finds not obsolescence but amplification. He is working on “a completely new level,” not because the machine has replaced him but because it has become a collaborator capable of engaging with his ideas at the highest level. The mathematician is not redundant. The mathematician is augmented.

This is consistent with a pattern emerging across disciplines as AI capabilities advance. The professionals who engage most seriously with frontier models tend to report not displacement but transformation of their working methods. The nature of expert work shifts: away from execution, toward problem formulation, evaluation, and the generation of novel ideas that the models cannot yet produce independently. Naskręcki himself anticipated this shift in his earlier interviews, when he argued that “the last domain left for mathematicians will be coming up with new, crazy mathematical ideas.” The difference now is that he is living inside that transition rather than theorising about it from a comfortable distance.

The question this raises for the broader scientific community is whether Naskręcki’s personal singularity is an isolated event or a leading indicator. Mathematics is often regarded as the ideal domain for measuring AI progress because its step-by-step logic is easy to track and its answers are definitively verifiable. If the singularity arrives first in mathematics, where verification is unambiguous, it carries implications for every adjacent field. Cryptography, theoretical physics, computational biology, and quantitative finance all depend on the same kind of formal reasoning that GPT-5.4 has now demonstrated at research level. The ripple effects of what happened this week on FrontierMath Tier 4 may extend far beyond the world of arithmetic geometry.

The Acceleration Problem

The broader trajectory is what should command attention, and it lends unexpected credibility to Musk’s otherwise hyperbolic claim that 2026 is the year of the singularity. In September 2025, Naskręcki predicted AI would “saturate” FrontierMath within two to three years. Six months later, the top model scores 50% on Tiers 1-3, and 42% of the problems on the hardest tier have been solved at least once. If the current rate of progress continues, his prediction may prove conservative by a significant margin. When the man who designed the test to prove AI could not do real mathematics is now declaring his own personal singularity, the word starts to feel less like marketing and more like description.

The convergence of singularity talk in early 2026 is not coincidental. It reflects a real acceleration that is visible across multiple benchmarks and domains simultaneously. Google DeepMind’s experimental Aletheia system recently achieved publishable PhD-level results in arithmetic geometry. The best score on FrontierMath’s Tiers 1-3 went from under 2% to 50% in sixteen months. Coding benchmarks that were state-of-the-art challenges a year ago are now routinely saturated by new model releases. Musk called it a “supersonic tsunami” during a conversation at Tesla’s Gigafactory in January, and while his metaphors tend toward the cinematic, the underlying data is moving fast enough to make even sober analysts uncomfortable.

Epoch AI has already begun preparing for this eventuality. They have started developing FrontierMath: Open Problems, a collection of unsolved mathematics problems that have resisted serious attempts by professional mathematicians. GPT-5.4 Pro was evaluated on these and did not solve any, though it made some novel observations on one problem (observations the author characterised as relatively uninteresting and anticipated). This represents the next frontier: can AI move beyond solving difficult known problems to contributing genuinely new mathematical knowledge?

The answer matters enormously, not just for mathematics but for every field that depends on mathematical reasoning. If AI systems can reliably perform at expert level on research-grade mathematical problems, the implications extend across physics, cryptography, drug discovery, materials science, and virtually every domain where formal reasoning underpins progress. Naskręcki’s earlier observation remains pertinent even as its framing has shifted: the last domain for mathematicians may indeed be “coming up with new, crazy mathematical ideas,” but the boundary of what counts as “new” is moving faster than almost anyone anticipated.

For Naskręcki himself, the practical conclusion is characteristically pragmatic. He has not retreated into defensive scepticism or existential crisis. He has identified a new collaborator, one that understands his research at the level of the top experts in his field, and he is already working with it. There is, as he put it, life on the other side.

Whether 2026 ultimately earns the title Musk bestowed on it remains to be seen. The singularity, if it means anything concrete, probably does not arrive as a single moment but as an accumulation of moments like Naskręcki’s, domain by domain, expert by expert, each one marking the point where a human professional discovers that the machine now operates at their level. The mathematician who built the test to prove AI could not reason has just told us, with the credibility of someone who staked his professional reputation on the opposite conclusion, that it can. That is not hype. That is data.