Helios Deep Dive: 98-Qubit Trapped-Ion Quantum Computer Demonstrated

A look at the science behind the machine from Quantinuum

The race to build a truly useful quantum computer just took a giant leap forward, as researchers unveiled Helios, a system boasting an impressive 98 qubits—the fundamental units of quantum information. While still a prototype, Helios represents a significant milestone in trapped-ion technology, a leading approach to realizing scalable quantum processors, and edges closer to the threshold where these machines could potentially outperform classical computers for specific tasks. This achievement, detailed in a new study, isn’t just about raw qubit count, but also about the system’s increasing control and connectivity, bringing the promise of quantum solutions for fields like materials science, drug discovery, and advanced computation into sharper focus.

Helios: A 98-Qubit Trapped-Ion Quantum Computer

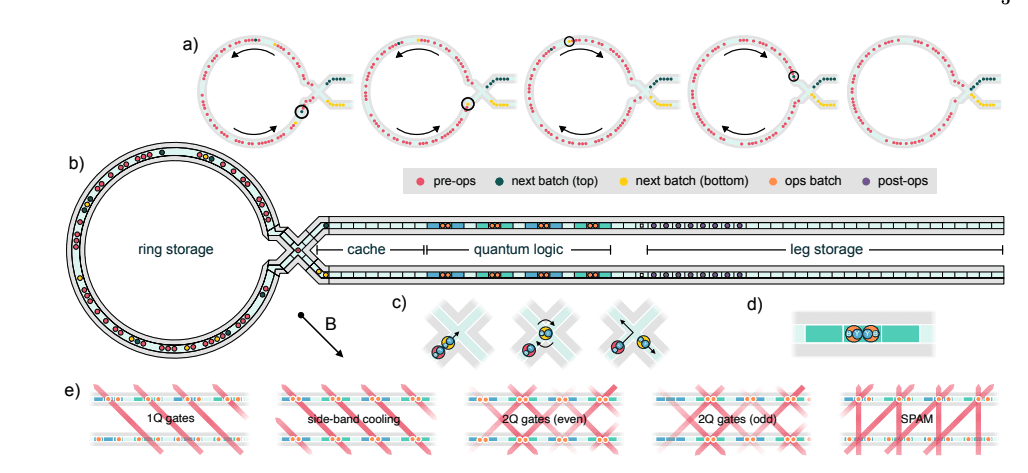

Utilizing barium-137 ions and the quantum charge-coupled device (QCCD) architecture, Quantinuum’s Helios is a 98-qubit trapped-ion quantum computer. This represents a significant leap in scaling, building upon previous 6-qubit QCCD systems. Helios employs a novel four-way “X” junction to connect ion storage (memory) and manipulation (logic) regions, streamlining connectivity without adding excessive control complexity. This design allows for all-to-all qubit connectivity, crucial for executing complex quantum algorithms.

Helios achieves impressive fidelity metrics. Averaged across the system, single-qubit gates demonstrate infidelities of 2.5 x 10⁻⁵, while two-qubit gates reach 7.9 x 10⁻⁴. State preparation and measurement also show low error rates at 4.8 x 10⁻⁴. Importantly, these aren’t fundamental limits – further improvements are anticipated. These high-fidelity components contribute to system-level performance exceeding the capabilities of classical simulation.
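To get a feel for what these component numbers imply at the circuit level, a rough error budget can be sketched by multiplying per-operation success probabilities. This is a back-of-the-envelope model, not the analysis used in the study: it assumes errors are independent, ignores memory and transport errors, and the function name and the gate counts in the example are hypothetical.

```python
def estimated_circuit_fidelity(n_1q, n_2q, n_qubits,
                               p_1q=2.5e-5, p_2q=7.9e-4, p_spam=4.8e-4):
    """Crude product-of-success-probabilities estimate.

    Assumes every operation fails independently with the reported
    average infidelity; memory and transport errors are not modeled.
    """
    return ((1 - p_1q) ** n_1q) * ((1 - p_2q) ** n_2q) * ((1 - p_spam) ** n_qubits)


# Hypothetical workload: 98 qubits and 20 layers, where each layer applies
# one single-qubit gate per qubit and pairs all qubits (49 two-qubit gates).
layers = 20
fidelity = estimated_circuit_fidelity(n_1q=98 * layers, n_2q=49 * layers, n_qubits=98)
print(f"Estimated circuit fidelity: {fidelity:.3f}")
```

Under this simple model the two-qubit gates dominate the budget, because their infidelity is roughly thirty times larger than that of single-qubit gates.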

The system’s performance is bolstered by a new real-time classical control implementation. This allows dynamic compilation of quantum programs and facilitates efficient management of qubit transport and operations. Helios’ architecture and high-fidelity gates position it at the forefront of quantum computing, enabling exploration of increasingly complex algorithms and demonstrating significant progress toward fault-tolerant quantum computation.

Trapped-Ion QCCD Architecture and Scaling Challenges

Quantinuum’s Helios uses a trapped-ion quantum charge-coupled device (QCCD) architecture, which prioritizes scalability by moving qubits rather than giving each one its own control hardware. Unlike stationary qubit systems requiring dedicated control hardware for each qubit, QCCD distributes resources – lasers for manipulation, cooling, and measurement – across processing zones. This limits the growth in complexity as qubit number increases, using ion transport to move quantum information between optimized “memory” and “logic” regions. Measured by qubit count, the QCCD approach has scaled roughly as fast as, or faster than, solid-state technologies, and Helios now has 98 qubits.

A key innovation in Helios is the implementation of a four-way “X” junction for connecting memory and logic zones. This junction efficiently routes ions without significantly increasing the complexity of electrical control or device fabrication – a critical advancement for scaling. By enabling flexible ion routing, the X junction avoids bottlenecks and facilitates all-to-all connectivity, allowing any qubit to interact with any other. This contrasts with architectures requiring more complex routing or limited qubit interactions.

Helios employs barium ions (¹³⁷Ba⁺) as qubits, demonstrating improved quantum operation error rates and a more scalable laser architecture compared to previous ytterbium-based systems. Combined with a new classical control implementation capable of real-time decision-making, Helios achieves impressive fidelity: 2.5 x 10⁻⁵ for single-qubit gates, 7.9 x 10⁻⁴ for two-qubit gates, and 4.8 x 10⁻⁴ for state preparation/measurement. These results demonstrate Helios’ capability to operate beyond the reach of classical simulation.

Benefits of Mobile Qubit Architectures

Mobile qubit architectures, like those employed in Quantinuum’s Helios (a 98-qubit trapped-ion system), offer distinct advantages in scaling quantum computers. Unlike stationary qubit designs where each qubit requires dedicated control infrastructure, mobile qubits – physically moved between processing zones – share resources. This reduces the multiplicative complexity of building larger systems, meaning fewer lasers and control lines are needed as qubit count increases, making scaling more efficient and potentially less expensive.

Helios leverages this mobile approach using a “quantum charge-coupled device” (QCCD) architecture and barium ions. The system utilizes a four-way junction to connect memory and logic regions, minimizing control complexity during qubit transport. This design contrasts with stationary qubit systems where delivering operations to each qubit becomes increasingly challenging as scale increases. Helios demonstrates roughly equivalent or faster qubit scaling compared to solid-state technologies, moving from 6 to 98 qubits in five years.

The benefits extend to performance. Helios achieves impressive component fidelities, with single-qubit gate errors of 2.5 x 10⁻⁵ and two-qubit gate errors of 7.9 x 10⁻⁴, and these figures are not fundamentally limited. This high fidelity, combined with the ability to distribute and share resources, enables complex computations beyond the reach of classical simulation, establishing a new benchmark for trapped-ion quantum computing and demonstrating the power of mobile qubit designs.

Helios System: Key Advancements & Design

Built on the quantum charge-coupled device (QCCD) architecture, Helios is Quantinuum’s 98-qubit trapped-ion quantum computer. A key innovation is the use of ¹³⁷Ba⁺ ions as qubits, offering improved performance and scalability compared to previous ytterbium-based systems. Helios utilizes a unique four-way “X” junction to connect ion storage (memory) and manipulation (logic) regions efficiently, minimizing complexity in both electrical control and device fabrication – a critical step for scaling up qubit numbers.

The system achieves all-to-all qubit connectivity via a rotatable ion storage ring, allowing any qubit to interact with any other. This is coupled with parallelized operations and a new software stack capable of real-time compilation of dynamic programs. Quantitatively, single-qubit gate infidelities average 2.5 x 10⁻⁵, while two-qubit gates reach 7.9 x 10⁻⁴. These low error rates, combined with advancements in state preparation and measurement, demonstrate Helios’s substantial leap in performance.
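The idea that a rotatable ring yields all-to-all connectivity can be illustrated with a toy model. The sketch below is purely illustrative and is not a description of the actual Helios trap layout or transport schedule: it assumes a single exit port at one position on the ring, and the class name `StorageRing` and its methods are hypothetical.

```python
from collections import deque


class StorageRing:
    """Toy model: ions sit in a ring, and only the ion at position 0
    (the assumed junction port) can leave for the gate zone."""

    def __init__(self, n_qubits):
        self.ring = deque(range(n_qubits))
        self.rotation_steps = 0  # total ring shifts performed so far

    def extract(self, qubit):
        """Rotate the ring the shorter way round, then remove `qubit`."""
        idx = self.ring.index(qubit)
        n = len(self.ring)
        if idx <= n - idx:
            self.ring.rotate(-idx)       # shift left by idx positions
            self.rotation_steps += idx
        else:
            self.ring.rotate(n - idx)    # shift right by n - idx positions
            self.rotation_steps += n - idx
        return self.ring.popleft()

    def insert(self, qubit):
        """Return an ion to the ring at the port position."""
        self.ring.appendleft(qubit)

    def gate_pair(self, a, b):
        """Bring qubits a and b out of the ring, then put them back."""
        first = self.extract(a)
        second = self.extract(b)
        # A two-qubit gate on (first, second) would be applied here.
        self.insert(second)
        self.insert(first)
        return first, second


ring = StorageRing(98)
print(ring.gate_pair(3, 60), "rotation steps:", ring.rotation_steps)
```

Because any ion can be rotated to the port, any pair can be brought together, which is the sense in which transport provides all-to-all connectivity. The price is transport time rather than extra wiring.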

These component-level fidelities translate to system-level performance exceeding classical simulation capabilities. Helios’s design facilitates efficient resource sharing, reducing complexity compared to stationary qubit architectures. The combination of barium ions, the X junction, parallelization, and the real-time software stack positions Helios as a frontier for complex quantum computations, demonstrating scalability alongside high fidelity—roughly keeping pace with, or exceeding, solid-state technologies.

Transition to Barium Ions for Improved Performance

Transitioning from ytterbium to barium ions (¹³⁷Ba⁺) as qubits, Quantinuum’s Helios represents a significant step forward. This change isn’t arbitrary; barium offers a more scalable laser architecture and demonstrably improved quantum operation error rates. Specifically, Helios achieves average single-qubit gate infidelities of 2.5 x 10⁻⁵ and two-qubit gate infidelities of 7.9 x 10⁻⁴ – crucial improvements for building larger, more reliable quantum processors. This move highlights a focus on optimizing the physical qubit itself for enhanced performance.

The architecture of Helios utilizes a four-way “X” junction to connect memory and logic regions. This design choice is key for scalability, avoiding increased electrical control complexity—a common challenge in expanding trapped-ion systems. By efficiently routing ions between zones, Helios enables all-to-all connectivity, meaning any qubit can interact with any other. This connectivity is essential for implementing complex quantum algorithms and maximizing computational flexibility.

Beyond hardware improvements, Helios benefits from a new classical control system capable of real-time decision-making. This allows for dynamic compilation and execution of arbitrary quantum programs, a departure from pre-programmed sequences. Coupled with the improved qubit fidelity and scalable architecture, Helios demonstrably operates beyond the reach of classical simulation and establishes a new benchmark for quantum computer performance and complexity with 98 qubits.

Efficient Ion Transport with the X Junction

Leveraging a unique “X” junction to efficiently move ions between quantum storage and operational regions, Helios, Quantinuum’s 98-qubit trapped-ion quantum computer, achieves high-performance quantum operations. This junction design is crucial for scalability, allowing connection between zones without increasing the complexity of electrical controls or trap fabrication – a key challenge in building larger quantum processors. By intelligently routing ions, Helios distributes computational resources and minimizes hardware duplication, a significant advantage over stationary-qubit architectures.

The X junction’s design is directly tied to Helios’s performance. The system utilizes ¹³⁷Ba⁺ hyperfine qubits and achieves remarkably low gate infidelities: 2.5 x 10⁻⁵ for single-qubit gates and 7.9 x 10⁻⁴ for two-qubit gates. These values, achieved across all operational zones, are not fundamentally limited and suggest potential for further optimization. The efficient ion transport enabled by the junction contributes to maintaining qubit coherence during movement, essential for complex computations.

Beyond the hardware, Helios benefits from a new real-time compilation software stack. This allows for dynamic program execution and optimization, adapting to the system’s capabilities during runtime. Coupled with the efficient ion transport and high-fidelity gates, Helios demonstrably operates beyond the capabilities of classical simulation, marking a significant advancement in quantum computing complexity and paving the way for more powerful quantum algorithms.

Real-Time Compilation and Dynamic Program Control

Helios, Quantinuum’s 98-qubit trapped-ion quantum computer, is built on an architecture designed for scalability. Unlike stationary qubit systems, Helios employs a quantum charge-coupled device (QCCD), a mobile qubit design. This allows hardware resources to be shared, reducing complexity as qubit counts increase. Using barium ions and a four-way junction, Helios efficiently connects memory and logic regions while minimizing control complexity, a crucial advancement for building larger, more powerful quantum processors.

A key innovation within Helios is its new classical control implementation. This system enables real-time compilation of dynamic programs. Instead of pre-defined instruction sequences, the control system dynamically adjusts operations based on qubit state and program requirements. This capability is vital for error mitigation and adapting to the inherent variability in quantum systems, allowing for more flexible and robust computation.
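A small example shows why a fixed instruction sequence is not enough for such programs. In a repeat-until-success pattern, the number of operations depends on measurement outcomes that are only known at run time. The sketch below is a classical toy, not Quantinuum’s software stack: `measure` is a hypothetical stand-in for a mid-circuit measurement, and the success probability is an arbitrary illustrative value.

```python
import random


def measure(p_one, rng):
    """Hypothetical stand-in for a mid-circuit measurement:
    returns 1 with probability p_one, otherwise 0."""
    return 1 if rng.random() < p_one else 0


def repeat_until_success(p_success, max_attempts, rng):
    """Retry a probabilistic step until a heralding measurement returns 1.

    Returns the number of attempts used, or None if the limit is reached.
    The loop length cannot be fixed at compile time, so the control
    system has to branch on each outcome as it arrives.
    """
    for attempt in range(1, max_attempts + 1):
        if measure(p_success, rng) == 1:
            return attempt
    return None


rng = random.Random(2024)  # seeded so that runs are repeatable
attempts = [repeat_until_success(0.5, 20, rng) for _ in range(5)]
print("Attempts needed in five runs:", attempts)
```

Each run may take a different number of attempts, which is exactly the kind of data-dependent control flow a real-time control system has to resolve on the fly.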

Real-time compilation and dynamic control are paired with impressive performance metrics. Helios achieves average single-qubit gate infidelities of 2.5 x 10⁻⁵ and two-qubit gate infidelities of 7.9 x 10⁻⁴. These low error rates, combined with the system’s scale, demonstrate its ability to operate beyond the reach of classical simulation and represent a significant leap forward in quantum computing fidelity and complexity.

Single-Qubit Gate Infidelity Performance

Helios, a 98-qubit trapped-ion quantum computer, demonstrates exceptional single-qubit gate performance, with average infidelities of 2.5(1) x 10⁻⁵. This fidelity is achieved using barium ions (¹³⁷Ba⁺) as qubits, a choice made to improve error rates and scalability compared to previous ytterbium-based systems. Importantly, this level of control isn’t fundamentally limited, suggesting potential for further optimization. The system’s design, featuring a rotatable ion storage ring, contributes to all-to-all connectivity and efficient qubit manipulation.

The achievement of such low single-qubit gate infidelity is crucial for complex quantum computations. Lower error rates directly translate to the ability to execute deeper circuits before accumulated errors overwhelm the result. Helios’ performance surpasses many existing quantum platforms, positioning it favorably for tackling problems intractable for classical computers. Furthermore, these component infidelities are predictive of strong overall system-level performance in both random Clifford circuits and random circuit sampling benchmarks.

Helios employs a novel four-way “X” junction to connect memory and logic regions, streamlining the architecture without increasing complexity. Combined with real-time compilation of dynamic programs, this allows for efficient execution of arbitrary quantum algorithms. The system’s design leverages hyperfine clock states and scalable micro-fabricated traps for transport control, demonstrating a robust and scalable approach to trapped-ion quantum computing.

Two-Qubit Gate Infidelity Performance

Helios is a 98-qubit trapped-ion quantum computer with impressive gate fidelities. Single-qubit gates achieve average infidelities of just 2.5 x 10⁻⁵, indicating highly accurate control over individual qubit states. Crucially, two-qubit gate infidelities average 7.9 x 10⁻⁴, a key metric for complex quantum algorithms. These low error rates, combined with a state preparation and measurement infidelity of 4.8 x 10⁻⁴, signify a substantial leap towards scalable, reliable quantum computation.

The reported gate infidelities aren’t fundamentally limited, suggesting further optimization is possible. This is particularly significant for two-qubit gates, often the bottleneck in quantum computation. Helios’s architecture – utilizing barium ions and a novel four-way junction for qubit transport – contributes to these results. The system’s ability to distribute computational resources efficiently, alongside advancements in real-time program compilation, allows for complex program execution and improved overall performance.

These fidelity numbers aren’t simply academic; they directly translate to system-level performance. Helios operates beyond the reach of classical simulation, demonstrating its potential for tackling problems intractable for conventional computers. The low error rates are predictive of successful execution of both random Clifford circuits and random circuit sampling, validating the system’s reliability and showcasing a new frontier in fidelity and complexity for quantum computing hardware.

State Preparation and Measurement Fidelity

State preparation and measurement (SPAM) fidelity is a crucial metric for quantum computers, and Helios achieves impressive results in this area. Specifically, the system demonstrates an average SPAM infidelity of 4.8(6) x 10⁻⁴. This means that about 99.95% of the time, the quantum state is correctly initialized and read out. Achieving such high fidelity is vital for complex quantum algorithms, as errors in SPAM quickly accumulate and degrade the overall computation.

Single and two-qubit gate fidelities are also key performance indicators. Helios reports average single-qubit gate infidelities of 2.5(1) x 10⁻⁵ – exceptionally low error rates. Two-qubit gate infidelities average 7.9(2) x 10⁻⁴, demonstrating strong control over qubit interactions. These low error rates, combined with the high SPAM fidelity, indicate a well-controlled system capable of executing complex quantum circuits with minimal accumulated error, pushing beyond the capabilities of classical simulation.
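The parenthesized digits in figures such as 4.8(6) are the standard concise notation for uncertainty: the digits in parentheses apply to the last quoted digits of the value, so 4.8(6) means 4.8 ± 0.6. A minimal parser makes the convention explicit. The input format with a trailing `e-4` exponent and the function name `parse_concise` are assumptions chosen for this illustration.

```python
import re


def parse_concise(text):
    """Parse concise uncertainty notation such as '4.8(6)e-4'.

    Returns (value, uncertainty). The digits in parentheses are scaled
    to the last decimal place of the quoted value.
    """
    match = re.fullmatch(r"(\d+)(?:\.(\d+))?\((\d+)\)(?:e([+-]?\d+))?", text)
    if match is None:
        raise ValueError(f"not in concise notation: {text!r}")
    whole, frac, unc, exponent = match.groups()
    frac = frac or ""
    scale = 10.0 ** int(exponent or 0)
    value = float(f"{whole}.{frac}" if frac else whole) * scale
    uncertainty = int(unc) * 10.0 ** (-len(frac)) * scale
    return value, uncertainty


for label, text in [("single-qubit", "2.5(1)e-5"),
                    ("two-qubit", "7.9(2)e-4"),
                    ("SPAM", "4.8(6)e-4")]:
    value, unc = parse_concise(text)
    print(f"{label}: {value:.2e} +/- {unc:.0e}")
```

Read this way, the reported SPAM infidelity lies between roughly 4.2 x 10⁻⁴ and 5.4 x 10⁻⁴, so the 99.95% success figure is itself uncertain in the last digit.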

Importantly, the researchers emphasize that these component infidelities—SPAM, single-qubit gates, and two-qubit gates—are not fundamentally limited. This suggests there’s significant potential for further optimization and improvement. The ability to continuously refine these parameters is critical for building larger, more robust quantum computers capable of tackling increasingly complex problems. The achieved performance firmly establishes Helios as a leading quantum computing platform.

Helios Performance: Random Clifford Circuits

Leveraging a unique quantum charge-coupled device (QCCD) architecture, Helios, Quantinuum’s 98-qubit trapped-ion processor, delivers advanced quantum capabilities. Unlike stationary qubit systems, Helios utilizes mobile qubits—barium ions—transported between memory and processing zones. This approach allows for efficient resource sharing and potentially faster scaling, offsetting the complexities of optical control. The system employs a four-way junction to connect these zones, minimizing electrical control complexity compared to earlier designs, and represents a significant step forward in trapped-ion QPU development.

The performance of Helios is characterized by exceptionally low gate errors. Single-qubit gates achieve an average infidelity of 2.5 x 10⁻⁵, while two-qubit gates reach 7.9 x 10⁻⁴. Critically, state preparation and measurement also exhibit low errors at 4.8 x 10⁻⁴. These component infidelities are not fundamentally limited, suggesting further optimization is possible. This high fidelity is vital for complex quantum computations and positions Helios at the forefront of quantum hardware.

These low error rates translate to strong performance in demanding benchmarks. Helios excels in random Clifford circuits, a standard test for quantum processors, and demonstrably surpasses the capabilities of classical simulation. This confirms Helios’s ability to perform computations beyond the reach of current classical computers, establishing a new benchmark for fidelity and complexity in the field. The system’s new software stack facilitates real-time compilation of dynamic programs, further enhancing its capabilities.

Helios Performance: Random Circuit Sampling

Leveraging a quantum charge-coupled device (QCCD) architecture, Helios, Quantinuum’s 98-qubit trapped-ion quantum computer, offers a platform for advanced quantum computation. This design utilizes mobile qubits – barium ions specifically – enabling efficient sharing of resources like lasers and control systems. Unlike stationary qubit approaches, QCCD distributes these resources, reducing complexity as qubit count increases. Helios employs a novel four-way “X” junction to connect memory and processing regions, maintaining scalability without significantly increasing control or fabrication demands.

A key performance metric for Helios lies in its remarkably low gate error rates. Single-qubit gates achieve an average infidelity of just 2.5 x 10⁻⁵, while two-qubit gates reach 7.9 x 10⁻⁴. State preparation and measurement also show a low infidelity of 4.8 x 10⁻⁴. These component-level infidelities are not fundamentally limited, suggesting further improvements are achievable. These numbers are critical, as they directly translate into robust performance in complex quantum computations.

The low error rates demonstrated by Helios allow it to operate beyond the capabilities of classical simulation. Validation through random circuit sampling confirms this, signifying a new frontier in quantum computing fidelity and complexity. The system’s real-time compilation of dynamic programs, combined with parallelized operations, allows for the execution of truly arbitrary quantum algorithms. Helios represents a significant step toward practical, scalable quantum computation.
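Random circuit sampling results are commonly scored with the linear cross-entropy benchmark (XEB), which compares the sampled bitstrings against the ideal output probabilities of the circuit. The sketch below shows that estimator on a tiny example. Whether the study used exactly this estimator is not stated here, so treat it as one common choice; the function name and the two-qubit distribution are illustrative assumptions.

```python
def linear_xeb(ideal_probs, samples, n_qubits):
    """Linear cross-entropy benchmark: F = 2^n * mean(p_ideal(x_i)) - 1.

    ideal_probs maps each bitstring to its ideal output probability;
    samples is the list of bitstrings returned by the device.
    """
    mean_prob = sum(ideal_probs[x] for x in samples) / len(samples)
    return (2 ** n_qubits) * mean_prob - 1


# Toy two-qubit distribution (made up for illustration).
ideal = {"00": 0.5, "01": 0.5, "10": 0.0, "11": 0.0}

# Samples spread evenly over all four bitstrings, as a fully
# depolarized device would produce, score zero.
noisy = ["00", "01", "10", "11"]
print("uniform samples:", linear_xeb(ideal, noisy, n_qubits=2))
```

The estimator needs the ideal probability of every sampled bitstring. At 98 qubits those probabilities cannot be computed by brute force, which is both why direct verification becomes hard and why such circuits are considered beyond classical simulation.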

Beyond Classical Simulation Capabilities

Operating at 98 qubits, Helios, a trapped-ion quantum computer developed by Quantinuum, represents a significant leap beyond the capabilities of classical simulation. Utilizing barium ions and a novel “X” junction for qubit transport, the system achieves all-to-all connectivity and efficient operation between memory and logic zones. Critically, Helios demonstrates performance exceeding the limits of classical computation, particularly in random circuit sampling – a key benchmark for quantum advantage. This advancement isn’t simply about qubit count, but achieving the fidelity necessary for complex calculations.

The system’s architecture addresses scaling challenges inherent in trapped-ion technology. By employing a mobile qubit design, in which ions are physically moved, Helios efficiently shares resources like laser systems, reducing the complexity associated with increasing qubit numbers. This contrasts with stationary-qubit architectures requiring dedicated control systems for each qubit. Helios achieves average single-qubit gate infidelities of 2.5 x 10⁻⁵ and two-qubit gate infidelities of 7.9 x 10⁻⁴, suggesting high operational precision.

Beyond hardware improvements, Helios features a new real-time compilation software stack. This allows for dynamic program execution and optimizes the control of both qubit transport and quantum operations. The combination of improved fidelity, scalable architecture, and dynamic software control positions Helios at the forefront of quantum computing, paving the way for exploring more complex algorithms and applications currently inaccessible to classical computers.

Frontier of Fidelity and Quantum Complexity

Boasting 98 fully-connected qubits based on barium ions, Quantinuum’s Helios represents a significant leap in trapped-ion quantum computing. This new processor utilizes a quantum charge-coupled device (QCCD) architecture, employing a novel four-way “X” junction to efficiently connect qubit storage and processing regions. Crucially, Helios moves beyond simply having more qubits; it prioritizes maintaining high fidelity while scaling – a key challenge for all quantum modalities. The design aims to distribute computational resources efficiently, sharing expensive hardware and reducing overall system complexity.

Helios demonstrates exceptionally low gate errors, averaging 2.5 x 10⁻⁵ for single-qubit gates and 7.9 x 10⁻⁴ for two-qubit gates. These error rates aren’t just low in isolation; they predict strong system-level performance in complex quantum computations. The architecture’s performance extends beyond classical simulation capabilities, marking a new threshold in quantum computing complexity. These results demonstrate that Helios isn’t just larger, but demonstrably more capable than previous systems.

The success of Helios stems from a combination of advanced hardware and software. Utilizing barium ions provides a more scalable laser architecture, and the real-time compilation of dynamic programs allows for truly arbitrary quantum algorithm execution. This integration of optimized components and intelligent control unlocks new possibilities for quantum research and application development. The QCCD approach, leveraging qubit transport, offers a viable pathway towards larger, more powerful, and increasingly practical quantum computers.

Quantinuum Helios System Overview

Leveraging the Quantum Charge-Coupled Device (QCCD) architecture, Quantinuum’s Helios system is a 98-qubit trapped-ion quantum computer. Utilizing barium ions (¹³⁷Ba⁺) as qubits, Helios aims for improved scalability and error rates compared to previous ytterbium-based systems. A key innovation is the four-way “X” junction, efficiently connecting qubit storage and processing regions without adding complexity to fabrication or control systems. This design facilitates mobile qubit architectures, allowing shared resource utilization and optimized performance.

Helios boasts all-to-all qubit connectivity via a rotatable ion storage ring. This unique feature, combined with parallelized operations, dramatically speeds up computation. Critically, the system achieves average single-qubit gate infidelities of 2.5 x 10⁻⁵ and two-qubit gate infidelities of 7.9 x 10⁻⁴ – figures not fundamentally limited and showing potential for further improvement. These low error rates are crucial for complex quantum computations.

Beyond hardware, Helios incorporates a new software stack enabling real-time compilation of dynamic programs. This capability allows for flexible and efficient execution of arbitrary quantum algorithms. Demonstrations using random Clifford circuits and random circuit sampling prove Helios surpasses the capabilities of classical simulation, establishing a new benchmark for fidelity and complexity in quantum computing and pushing the boundaries of what’s computationally possible.

Source: https://arxiv.org/pdf/2511.05465

I hope you don't mind my throwing out one more question... Do you know which two-qubit gate they use in their benchmarking tests? I know that at least in the past, prior to Forte, IonQ preferred the Mølmer–Sørensen gate, which for just two qubits reduces to an RXX but includes a global rotation. Different modalities often imply different gates, but as they share the same basic qubit modality I would assume Quantinuum uses the same.

Anyway, I was impressed by your article. Well organized and detailed, and I don't wish to detract from that. I also realize these are a lot of questions. Perhaps some of them could be addressed in a later article? Regardless, thank you for a highly informative piece on a fascinating and important development.

I am impressed by the simplicity of design and low infidelity numbers. At the same time I find myself wondering about the scalability, particularly as any approach to quantum computing will ultimately require error correction, and depending on one's code the number of physical qubits required for a single logical qubit may number in the tens, hundreds or thousands. Surface codes, such as those employed by Google, require more; IBM's bivariate bicycle "gross" code (288 physical qubits for 12 logical, if memory serves) and Microsoft's Tesseract require far fewer.

In terms of scalability, I remember a neutral atom approach being employed by the Chinese where laser tweezers were being controlled by AI in order to quickly arrange arrays of atoms that numbered about 4000. QuEra is also using lasers to move entire arrays of atoms during computation which may prove useful in the dynamic creation of transversal gates, a key element in error correction codes.

At some point it seems likely that Quantinuum will have to consider new topologies for their tracks even if this violates the principle of all-to-all connectivity. Are there thoughts regarding how those may be arranged? What about how they may be controlled? Might machine learning and intelligence be used to control the logic at runtime? Regardless, another violation of all-to-all connectivity will likely consist of the creation of modules, each with a relatively small number of logical qubits. I remember someone from IBM suggesting only a dozen or so in one talk. Are there thoughts about the coupling?

With such low infidelity numbers the cost of error correction will be quite low but it will increase as a logarithm of the scale and must eventually be accounted for. And in time we will need to scale into the thousands, millions and billions of logical qubits. Does Quantinuum have a planned timeline with specific stages and years in mind?